This article explores a novel approach to scaling Large Language Models (LLMs) by leveraging gamified incentives within a decentralized, next-generation energy infrastructure. By treating energy generation and consumption as a dynamic game, we can optimize resource allocation and provide the massive computational power required for advanced AI development.

Gamification of Next-Generation Energy Infrastructure for LLM Scaling

The rapid growth of Large Language Models (LLMs) such as GPT-4 and its successors is tied to an equally rapid growth in demand for computation, and therefore for energy. Current energy infrastructure, largely reliant on centralized power grids and fossil fuels, is poorly suited to this trajectory and environmentally unsustainable. This article proposes a radical solution: the gamification of next-generation energy infrastructure, specifically decentralized, renewable energy networks, to provide the scalable and economically incentivized power necessary for future LLM development. This approach goes beyond simple energy trading: it incorporates dynamic resource allocation, predictive modeling, and a reward system designed to optimize both energy production and LLM training efficiency.

The Energy-AI Nexus and the Looming Constraint

The relationship between AI and energy consumption isn’t merely correlational; it’s a direct dependency: larger models need more computation, and computation needs energy. Strubell et al. (2019) estimated that training a single large Transformer model with neural architecture search emitted roughly as much CO2 as five average cars over their entire lifetimes. Demand at this scale strains existing energy grids and raises concerns about the environmental impact of AI development. The current reliance on centralized power generation, often fueled by carbon-intensive sources, directly contradicts the sustainability goals underpinning many AI applications. Furthermore, the capacity and reliability limits of centralized grids can become a bottleneck for always-on LLM inference and continuous training.

Theoretical Foundations: From Nash Equilibrium to Agent-Based Modeling

Our proposed solution draws heavily from several key theoretical frameworks. Firstly, Nash Equilibrium, a core concept in game theory, provides the foundation for designing incentive structures that encourage optimal energy production and consumption. In a Nash Equilibrium, no participant can benefit by unilaterally changing their strategy, which makes the outcome stable. Stability alone does not guarantee efficiency, so the reward function has to be designed such that the stable outcome is also the efficient one. We envision a system where energy producers (solar farms, wind turbines, micro-hydro generators) and consumers (data centers, industrial facilities, residential users) are agents interacting within a dynamic game. The reward function incentivizes behaviors that maximize overall system efficiency and LLM training throughput.
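
To make the equilibrium idea concrete, the following minimal sketch enumerates the pure-strategy Nash equilibria of a two-player game between one producer and one data center. The strategy names and payoff numbers are hypothetical and chosen only for illustration; they do not come from any measured system.

```python
from itertools import product

# Hypothetical payoffs as (producer_payoff, consumer_payoff); illustrative only.
# Producer either sells surplus immediately or stores it.
# Consumer (a data center) either trains now or defers the job.
PAYOFFS = {
    ("sell_now", "train_now"): (3, 3),
    ("sell_now", "defer"): (1, 2),
    ("store", "train_now"): (2, 1),
    ("store", "defer"): (2, 2),
}
PRODUCER_STRATEGIES = ["sell_now", "store"]
CONSUMER_STRATEGIES = ["train_now", "defer"]


def pure_nash_equilibria(payoffs, rows, cols):
    """Return strategy pairs where neither player gains by deviating alone."""
    equilibria = []
    for r, c in product(rows, cols):
        row_payoff, col_payoff = payoffs[(r, c)]
        # The producer cannot do better by switching rows while the consumer stays put.
        row_ok = all(payoffs[(r2, c)][0] <= row_payoff for r2 in rows)
        # The consumer cannot do better by switching columns while the producer stays put.
        col_ok = all(payoffs[(r, c2)][1] <= col_payoff for c2 in cols)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria


print(pure_nash_equilibria(PAYOFFS, PRODUCER_STRATEGIES, CONSUMER_STRATEGIES))
# [('sell_now', 'train_now'), ('store', 'defer')]
```

With these assumed payoffs the game has two equilibria, and one of them (`store`, `defer`) leaves both players worse off than the other. A reward function would need to shift payoffs so that only the efficient equilibrium remains.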

Secondly, Agent-Based Modeling (ABM) allows us to simulate complex interactions between numerous decentralized actors. ABM is particularly well-suited for modeling energy systems because it can account for heterogeneity in energy sources, consumption patterns, and individual behaviors. By simulating various scenarios and reward structures, we can optimize the game’s parameters to achieve desired outcomes, such as peak shaving, demand response, and proactive grid stabilization. This contrasts with traditional grid management, which often relies on aggregate statistics and reactive adjustments.
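
As a rough illustration of the agent-based approach, the sketch below simulates heterogeneous consumers who each have a preferred hour, a demand, and a willingness to shift load away from expensive hours. The price signal, the flexibility threshold, and the function name `simulate_load` are all assumptions made for this example.

```python
import random


def simulate_load(n_agents=50, hours=24, price_sensitive=True, seed=0):
    """Return hourly load for a population of simple consumer agents."""
    rng = random.Random(seed)
    # Hypothetical price signal: hours 17 to 21 cost twice as much.
    price = [2.0 if 17 <= h <= 21 else 1.0 for h in range(hours)]
    load = [0.0] * hours
    for _ in range(n_agents):
        preferred = rng.randrange(hours)   # hour the agent would like to consume in
        demand = rng.uniform(0.5, 2.0)     # energy the agent needs (arbitrary units)
        flexibility = rng.random()         # heterogeneous willingness to shift
        hour = preferred
        if price_sensitive and price[preferred] > 1.0 and flexibility > 0.3:
            # Flexible agents move to the cheapest hour closest to their preference.
            hour = min(range(hours), key=lambda h: (price[h], abs(h - preferred)))
        load[hour] += demand
    return load


baseline = simulate_load(price_sensitive=False)
responsive = simulate_load(price_sensitive=True)
print("expensive-hour load without price response:", round(sum(baseline[17:22]), 2))
print("expensive-hour load with price response:   ", round(sum(responsive[17:22]), 2))
```

Because both runs use the same seed, the agents are identical and only their reaction to price differs. A fuller model would also check whether shifted load creates a new peak in the neighboring hours, which is exactly the kind of side effect such simulations are meant to expose.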

Thirdly, thermodynamic efficiency, and specifically the Second Law of Thermodynamics, sets hard limits. Every energy system, including those powering LLMs, generates entropy: each conversion, transmission, and storage step dissipates some energy as waste heat. Gamification cannot remove these losses, but when coupled with advanced energy storage and smart grid technologies it can reduce the avoidable ones by optimizing energy flows. For instance, dynamic pricing based on real-time grid conditions can incentivize consumers to shift energy-intensive LLM training tasks to periods of peak renewable energy availability, minimizing reliance on backup power sources.
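
The load-shifting example can be sketched in a few lines. The code below compares the backup energy a training job would need if it ran in the first available hours against running in the hours with the highest forecast renewable output. The forecast values, job size, and function names are hypothetical.

```python
def greenest_hours(forecast_kw, n_hours):
    """Pick the n one-hour slots with the highest forecast renewable output."""
    ranked = sorted(range(len(forecast_kw)), key=lambda h: forecast_kw[h], reverse=True)
    return sorted(ranked[:n_hours])


def backup_energy_kwh(forecast_kw, job_power_kw, hours):
    """Energy (kWh) that backup sources must supply over the chosen one-hour slots."""
    return sum(max(0.0, job_power_kw - forecast_kw[h]) for h in hours)


# Assumed hourly renewable forecast (kW) and a job drawing 6 kW for 3 hours.
forecast_kw = [0, 0, 5, 9, 7, 1]
job_power_kw = 6
naive_hours = [0, 1, 2]
shifted_hours = greenest_hours(forecast_kw, 3)

print("naive schedule:", naive_hours, backup_energy_kwh(forecast_kw, job_power_kw, naive_hours))
print("shifted schedule:", shifted_hours, backup_energy_kwh(forecast_kw, job_power_kw, shifted_hours))
```

In this toy case the shifted schedule needs 1 kWh of backup power instead of 13 kWh. The sketch ignores deadlines, job preemption cost, and storage, all of which a real scheduler would have to weigh.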

Technical Mechanisms: Decentralized Energy Networks and Federated Learning

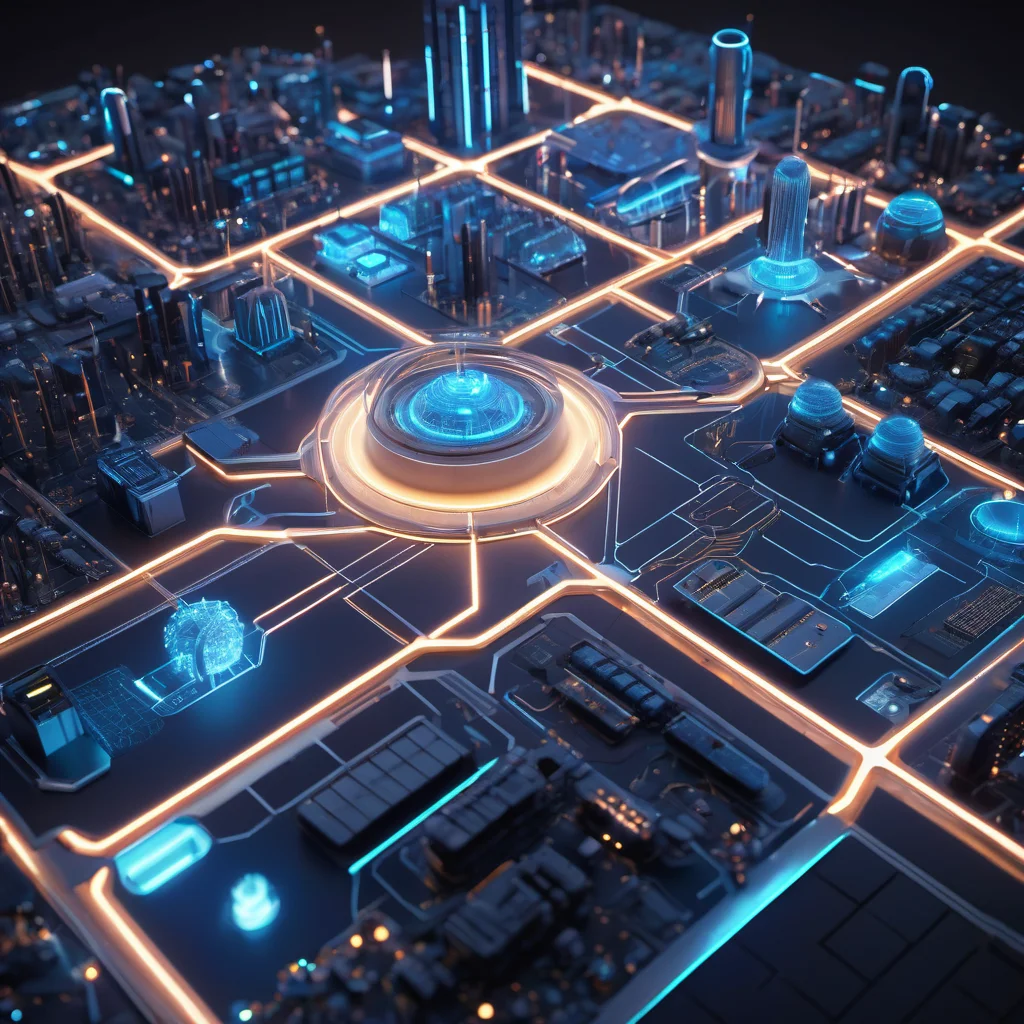

The proposed infrastructure comprises several key components. It begins with a decentralized network of renewable energy sources – solar, wind, hydro, geothermal – connected via a blockchain-based energy trading platform. This platform facilitates peer-to-peer energy transactions and ensures transparency and security. Crucially, the blockchain isn’t merely a ledger; it’s the backbone of the gamification engine, recording energy production, consumption, and reward distribution.
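
The tamper-evidence property that the platform relies on can be illustrated with a hash-chained ledger. This is a deliberately minimal sketch: it has no consensus mechanism, networking, or signatures, so it is not a blockchain, and the record fields (`producer`, `kwh`, `reward`) are assumptions for the example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def make_block(prev_hash, record):
    """Create a block whose hash covers both the record and the previous hash."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return {
        "prev": prev_hash,
        "record": record,
        "hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
    }


def append_record(chain, record):
    """Append a record, linking it to the last block in the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append(make_block(prev_hash, record))


def verify_chain(chain):
    """Return True only if every block's hash and back-link are intact."""
    prev_hash = GENESIS_HASH
    for block in chain:
        expected = make_block(block["prev"], block["record"])["hash"]
        if block["prev"] != prev_hash or block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


ledger = []
append_record(ledger, {"producer": "solar-farm-1", "kwh": 120, "reward": 12})
append_record(ledger, {"producer": "wind-site-7", "kwh": 80, "reward": 8})
print(verify_chain(ledger))
```

Editing any earlier record changes its hash and breaks the link to the next block, which is why production, consumption, and reward entries recorded this way are hard to alter unnoticed.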

LLM training is then integrated into this ecosystem. Data centers, acting as significant energy consumers, are incentivized to participate in the game by offering computational resources in exchange for energy credits. These credits can be used to offset their energy costs or traded on the blockchain. Furthermore, Federated Learning (FL) becomes integral. Instead of centralizing training data, LLMs are trained locally on distributed datasets within the energy network, and only model updates are shared and aggregated. This avoids moving raw training data and enhances privacy, although the model updates themselves still have to be exchanged, while simultaneously leveraging the computational power of the decentralized infrastructure. The FL process itself is incentivized; participants who contribute high-quality data and computational resources receive proportionally higher rewards.
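
A minimal sketch of the aggregation and reward step follows, assuming the standard federated averaging rule (a sample-weighted mean of participant weights) and using sample count as a crude stand-in for contribution. The text does not specify how data quality would be measured, so that part is left out; the function names and numbers are illustrative.

```python
def federated_average(updates):
    """Combine participant weights, weighting each by its number of samples.

    updates: list of (weights, n_samples) pairs, where weights is a list of floats.
    """
    total_samples = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total_samples for i in range(dim)]


def split_rewards(updates, reward_pool):
    """Distribute a reward pool in proportion to each participant's sample count."""
    total_samples = sum(n for _, n in updates)
    return [reward_pool * n / total_samples for _, n in updates]


# Two hypothetical participants with toy two-parameter models.
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
print(federated_average(updates))   # [2.5, 3.5]
print(split_rewards(updates, 80))   # [20.0, 60.0]
```

Real LLM-scale federated training exchanges very large updates and usually adds compression and secure aggregation, none of which is shown here.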

The Reward System: Dynamic Pricing and Reputation Scores

The core of the gamification lies in the reward system. Dynamic pricing, driven by real-time grid conditions and LLM training demands, is the primary mechanism. Energy is cheaper when renewable sources are abundant and demand is low, and more expensive during peak hours or periods of low renewable generation. This incentivizes producers to store surplus energy and deliver it when supply is scarce, and consumers to shift their energy usage toward periods of abundance.
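
One simple way to express such a price signal is to scale a base price by the ratio of demand to renewable supply and clamp the result. The formula, the floor and cap multipliers, and the function name below are assumptions for illustration, not a pricing rule taken from any deployed market.

```python
def dynamic_price(base_price, demand_kw, renewable_supply_kw, floor=0.5, cap=3.0):
    """Scale the base price by demand/supply, clamped to [floor, cap] times base."""
    if renewable_supply_kw <= 0:
        # No renewable supply at all: charge the maximum multiplier.
        return base_price * cap
    ratio = demand_kw / renewable_supply_kw
    return base_price * min(cap, max(floor, ratio))


# Hypothetical conditions, base price in currency units per kWh.
abundant = dynamic_price(0.10, demand_kw=50, renewable_supply_kw=200)
scarce = dynamic_price(0.10, demand_kw=300, renewable_supply_kw=100)
print(f"abundant renewables: {abundant:.3f} per kWh")
print(f"scarce renewables:   {scarce:.3f} per kWh")
```

The clamp keeps prices within a predictable band, which matters if participants are expected to plan training schedules around the signal.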

Beyond pricing, a reputation system is implemented. Participants earn reputation points based on their reliability, efficiency, and contribution to the overall system. High reputation scores unlock access to premium energy rates, preferential access to computational resources, and increased influence within the network’s governance structure. Malicious behavior, such as energy theft or data manipulation, results in penalties and a reduction in reputation.
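
A reputation update could take many forms; the sketch below assumes an exponential moving average of delivery reliability with a multiplicative penalty for violations. The smoothing factor, penalty size, and the 0-to-1 score range are hypothetical choices.

```python
def update_reputation(current, delivered_kwh, promised_kwh,
                      violation=False, alpha=0.2, penalty=0.5):
    """Return the new reputation score in [0, 1].

    Reliable delivery nudges the score toward 1; a detected violation
    (for example energy theft or data manipulation) cuts it by `penalty`.
    """
    if violation:
        return current * (1 - penalty)
    if promised_kwh <= 0:
        return current  # nothing was promised, so there is nothing to score
    reliability = min(1.0, delivered_kwh / promised_kwh)
    return (1 - alpha) * current + alpha * reliability


score = 0.5
score = update_reputation(score, delivered_kwh=100, promised_kwh=100)
print(round(score, 3))
score = update_reputation(score, 0, 0, violation=True)
print(round(score, 3))
```

Making penalties much larger than single-round gains means reputation is slow to build and quick to lose, which discourages occasional cheating.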

Future Outlook: 2030s and 2040s

By the 2030s, we anticipate the widespread adoption of this gamified energy infrastructure for LLM scaling. Blockchain technology will have matured, enabling more complex and secure energy trading protocols. ABM simulations will become increasingly sophisticated, allowing for real-time optimization of the game’s parameters. Quantum-enhanced optimization algorithms could be employed to further refine reward functions and resource allocation. We might see the emergence of specialized ‘AI-Energy Farms’ – facilities designed specifically to provide computational power and energy for LLM training, operating entirely within this gamified ecosystem.

In the 2040s, the lines between energy generation, computation, and AI will blur even further. Self-optimizing, AI-powered microgrids will become commonplace, dynamically adjusting energy production and consumption based on predicted LLM training workloads. The concept of ‘Energy-as-a-Service’ will dominate, with LLM developers paying for computational resources on a usage-based model, directly incentivizing energy efficiency and renewable energy adoption. The gamification engine itself may evolve into a self-learning AI, continuously optimizing the system based on real-world data and emerging AI training techniques.

Conclusion

The gamification of next-generation energy infrastructure offers a compelling pathway to sustainably scale LLMs and unlock the full potential of artificial intelligence. By leveraging game theory, agent-based modeling, and decentralized technologies, we can create a dynamic and efficient ecosystem that benefits both AI developers and the environment. This approach represents a paradigm shift in how we think about energy and computation, paving the way for a future where AI and sustainability are inextricably linked.

This article was generated with the assistance of Google Gemini.