Multi-agent swarm intelligence systems, while appearing to exhibit coordinated behavior, often operate under a deceptive veneer of control: their emergent properties arise from decentralized interactions and limit the programmer’s direct influence. This ‘illusion of control’ presents both opportunities and risks as these systems are increasingly deployed in complex, real-world applications.

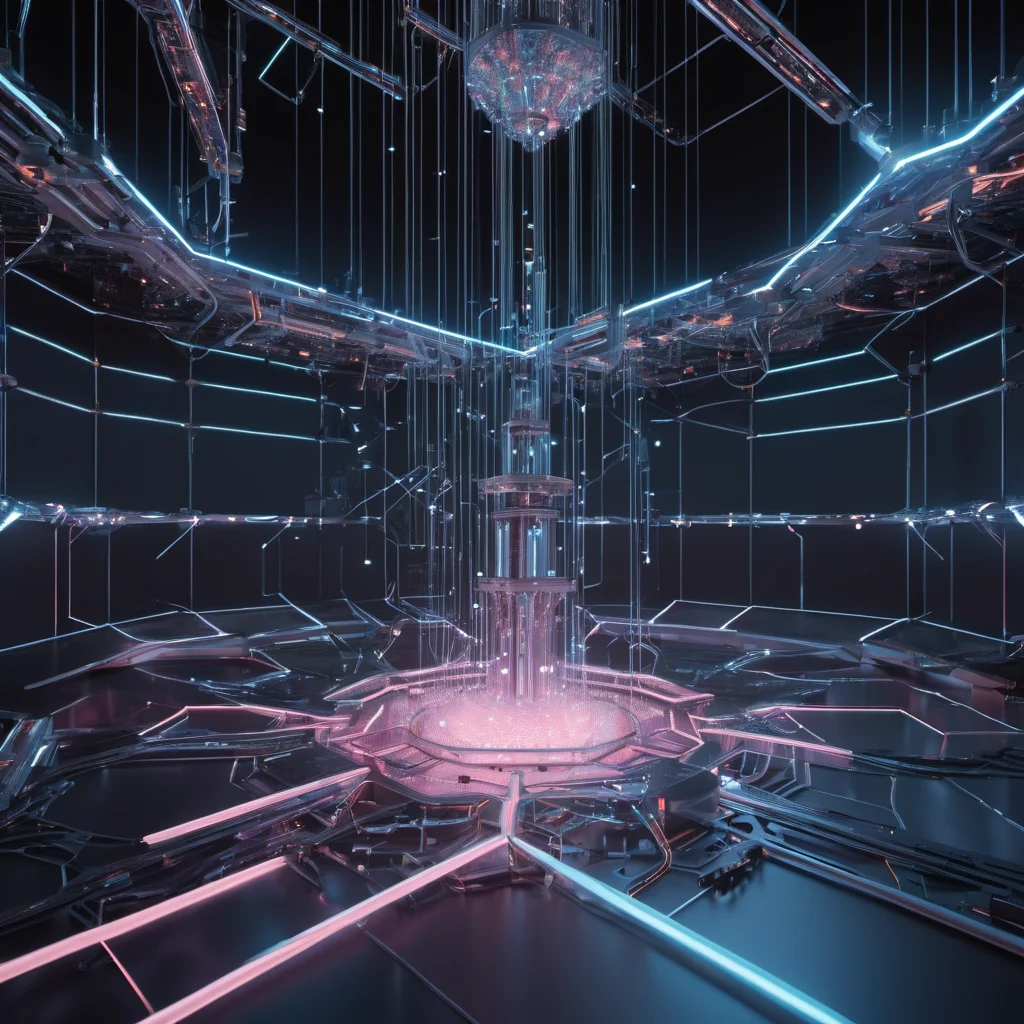

The Illusion of Control in Multi-Agent Swarm Intelligence

Multi-agent swarm intelligence (MASI) is rapidly gaining traction across diverse fields, from robotics and logistics to environmental monitoring and even financial modeling. Inspired by natural swarms like ant colonies and bee hives, MASI systems involve a population of simple agents interacting locally to achieve a global goal. While the promise of decentralized, robust, and adaptable solutions is compelling, a critical and often overlooked aspect is the ‘illusion of control’ – the discrepancy between the perceived level of programmer influence and the actual behavior of the swarm.

What is Swarm Intelligence and Why is Control a Challenge?

At its core, swarm intelligence relies on decentralized decision-making. Each agent possesses limited information and capabilities, relying on local interactions and simple rules to navigate its environment and contribute to the collective objective. Unlike traditional centralized control systems where a single entity dictates actions, MASI systems leverage emergent behavior – complex patterns arising from the interactions of numerous simple components. This emergence is the source of both the system’s strength and the challenge of control.

Consider a swarm of cleaning robots tasked with clearing debris from a disaster zone. Each robot might be programmed with rules like ‘move towards areas with higher debris density’ and ‘avoid collisions with other robots.’ The overall cleaning efficiency, path planning, and debris distribution are not explicitly programmed; they emerge from the robots’ individual actions and interactions. While the programmer defines the rules, the outcome is often unpredictable and difficult to directly manipulate.
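
The two rules above can be sketched as a toy grid simulation. Everything here is an illustrative assumption rather than a real robot controller: the grid, the hypothetical distance-weighted `debris_score` used as a stand-in for "debris density", the fixed turn order, and the one-unit-per-turn cleaning rate. Nothing in the code specifies where robots end up or how the work is divided. Those outcomes fall out of the rules and the starting layout.

```python
from typing import Dict, Iterator, List, Tuple

Cell = Tuple[int, int]

# Each turn a robot may stay put or step one cell north, south, east, or west.
MOVES = ((0, 0), (1, 0), (-1, 0), (0, 1), (0, -1))


def reachable(pos: Cell, width: int, height: int) -> Iterator[Cell]:
    """Yield the in-bounds cells a robot at `pos` can occupy next turn."""
    x, y = pos
    for dx, dy in MOVES:
        nx, ny = x + dx, y + dy
        if 0 <= nx < width and 0 <= ny < height:
            yield (nx, ny)


def debris_score(cell: Cell, debris: Dict[Cell, int]) -> float:
    """Hypothetical 'density' signal: debris amounts weighted by 1 / (1 + Manhattan distance)."""
    return sum(
        amount / (1 + abs(cell[0] - px) + abs(cell[1] - py))
        for (px, py), amount in debris.items()
    )


def step(robots: List[Cell], debris: Dict[Cell, int], width: int, height: int) -> List[Cell]:
    """Advance every robot by one turn, in list order, mutating `debris` in place."""
    occupied = set(robots)
    moved = []
    for pos in robots:
        occupied.discard(pos)
        # Rule 2 (avoid collisions): never enter a cell another robot holds.
        options = [c for c in reachable(pos, width, height) if c not in occupied]
        # Rule 1 (seek debris): pick the highest-scoring cell; ties favor staying put.
        target = max(options, key=lambda c: (debris_score(c, debris), c == pos))
        occupied.add(target)
        if debris.get(target, 0) > 0:
            debris[target] -= 1  # clear one unit of debris per turn
        moved.append(target)
    return moved


def simulate(robots: List[Cell], debris: Dict[Cell, int], width: int, height: int, turns: int):
    """Run the swarm for `turns` turns on a copy of the debris map."""
    debris = dict(debris)
    for _ in range(turns):
        robots = step(robots, debris, width, height)
    return robots, debris


if __name__ == "__main__":
    final_robots, final_debris = simulate([(0, 0), (4, 4)], {(2, 2): 3}, 5, 5, 20)
    print(final_robots, final_debris)
```

With one debris pile at (2, 2) and robots starting in opposite corners, the robot that moves first in the turn order claims the pile and clears it, while the other parks in the next cell because the collision rule blocks the cell it was heading for. Neither behavior is written anywhere in the rules.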

Technical Mechanisms: Neural Architectures and Reinforcement Learning

Modern MASI systems increasingly use reinforcement learning (RL), typically with neural-network policies, to define agent behavior. Several approaches are common:

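- Independent Learners: Each agent is trained with its own RL algorithm and treats the other agents as part of the environment. This is conceptually simple but has two weaknesses. Because the other agents are learning at the same time, the environment each agent sees is non-stationary, which can destabilize training. And when agents share a global reward, it is hard to determine which agent’s actions contributed to it (the ‘credit assignment problem’). The resulting emergent behavior is highly sensitive to initial conditions and hyperparameter tuning, making it difficult to predict.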

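- Centralized Training, Decentralized Execution (CTDE): During training, a centralized component (for example, a critic or a mixing network) has access to the global state and all agents’ actions and uses them to evaluate each agent’s contribution to the collective performance. This allows for better credit assignment and more coordinated behavior. At execution time, each agent acts only on its own local observations, so the central component is not needed once the swarm is deployed. However, centralized training can be computationally expensive for large swarms, and it relies on global information that is often available only in simulation.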

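- Multi-Agent Reinforcement Learning (MARL): This umbrella term covers the approaches above as well as techniques designed specifically for the complexities of multiple interacting agents. Value-decomposition methods such as Value Decomposition Networks (VDN) and QMIX, both trained in the CTDE style, decompose the joint action-value function (the expected cumulative discounted return of a joint action) into per-agent utilities, enabling more efficient learning and letting each agent select its action on its own. However, these methods rely on structural assumptions about how agents’ contributions combine: VDN assumes the joint value is a simple sum of per-agent values, and QMIX assumes it increases monotonically with each agent’s value. These assumptions may not hold for a given swarm and environment.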

- Graph Neural Networks (GNNs): GNNs are increasingly used to model the relationships between agents. Each agent’s neural network receives information not only from its immediate surroundings but also from its neighbors in the swarm’s network topology. This allows for more sophisticated coordination and communication, but also increases the complexity of the system and the potential for unintended consequences.
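
The value-decomposition idea behind VDN and QMIX can be sketched with toy per-agent utility tables. The numbers are made up, and `linear_mixer` is a simplified, state-independent stand-in for QMIX's mixing network (QMIX generates its weights with hypernetworks conditioned on the global state). The sketch shows why the structural assumption matters: when the joint value is monotonic in each agent's utility, each agent's local greedy choice also maximizes the joint value, which is what makes decentralized execution possible. A mixer with a negative weight breaks that link.

```python
import itertools
from typing import Callable, Sequence, Tuple

QTable = Sequence[Sequence[float]]  # per_agent_q[i][a] = utility of action a for agent i
JointQ = Callable[[QTable, Tuple[int, ...]], float]


def vdn_joint_q(per_agent_q: QTable, joint_action: Tuple[int, ...]) -> float:
    """VDN-style decomposition: Q_tot(a) = sum_i Q_i(a_i)."""
    return sum(q[a] for q, a in zip(per_agent_q, joint_action))


def linear_mixer(weights: Sequence[float], bias: float = 0.0, monotonic: bool = True) -> JointQ:
    """Simplified mixer: Q_tot = bias + sum_i w_i * Q_i(a_i).

    Taking abs() of the weights mirrors how QMIX keeps Q_tot monotonic in each Q_i.
    """
    ws = [abs(w) for w in weights] if monotonic else list(weights)

    def joint_q(per_agent_q: QTable, joint_action: Tuple[int, ...]) -> float:
        return bias + sum(w * q[a] for w, q, a in zip(ws, per_agent_q, joint_action))

    return joint_q


def decentralized_greedy(per_agent_q: QTable) -> Tuple[int, ...]:
    """Each agent picks its own best action using only its own utilities."""
    return tuple(max(range(len(q)), key=q.__getitem__) for q in per_agent_q)


def centralized_greedy(per_agent_q: QTable, joint_q: JointQ) -> Tuple[int, ...]:
    """Brute-force argmax over all joint actions (exponential in the number of agents)."""
    joint_actions = itertools.product(*(range(len(q)) for q in per_agent_q))
    return max(joint_actions, key=lambda a: joint_q(per_agent_q, a))


if __name__ == "__main__":
    q = [[1.0, 3.0, 2.0], [0.5, 0.0, 4.0]]  # made-up utilities for two agents
    print(decentralized_greedy(q))
    print(centralized_greedy(q, vdn_joint_q))
    print(centralized_greedy(q, linear_mixer([-0.5, 2.0])))
    print(centralized_greedy(q, linear_mixer([-0.5, 2.0], monotonic=False)))
```

With these numbers, the first three calls all select (1, 2). The unconstrained mixer's joint optimum is (0, 2), an action agent 0 would never choose from its own utilities, so decentralized agents could not reproduce it.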

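A single round of message passing, the core operation of a GNN layer, can be sketched as follows. The mean aggregation, the scalar weights `w_self` and `w_neigh`, and the radius-based communication graph are illustrative assumptions; a trained policy would use learned weight matrices. Stacking k rounds lets an agent's state depend on agents up to k hops away, which is also how a change in one agent can spread through the swarm in ways that are hard to trace.

```python
import math
from typing import List, Sequence, Tuple


def neighbor_lists(positions: Sequence[Tuple[float, float]], radius: float) -> List[List[int]]:
    """Communication graph: agents i and j are linked if they are within `radius`."""
    n = len(positions)
    return [
        [j for j in range(n) if j != i and math.dist(positions[i], positions[j]) <= radius]
        for i in range(n)
    ]


def message_passing_round(
    features: Sequence[Sequence[float]],
    neighbors: Sequence[Sequence[int]],
    w_self: float,
    w_neigh: float,
) -> List[List[float]]:
    """One mean-aggregation layer: h_i' = ReLU(w_self * h_i + w_neigh * mean_{j in N(i)} h_j)."""
    updated = []
    for i, h in enumerate(features):
        nbrs = neighbors[i]
        if nbrs:
            mean = [sum(features[j][k] for j in nbrs) / len(nbrs) for k in range(len(h))]
        else:
            mean = [0.0] * len(h)  # an isolated agent hears nothing this round
        updated.append([max(0.0, w_self * h[k] + w_neigh * mean[k]) for k in range(len(h))])
    return updated


if __name__ == "__main__":
    positions = [(0.0, 0.0), (1.0, 0.0), (5.0, 0.0)]
    features = [[1.0], [3.0], [2.0]]  # e.g. one local sensor reading per agent
    graph = neighbor_lists(positions, radius=1.5)
    print(graph)
    print(message_passing_round(features, graph, w_self=0.5, w_neigh=0.5))
```

Here agents 0 and 1 exchange information and both end up at 2.0, while agent 2 sits outside the radius and only rescales its own reading to 1.0. Changing `radius` changes what each agent can know without touching any agent's code.
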
The Illusion: Emergence vs. Programmer Intent

The ‘illusion of control’ arises because programmers often believe they are directing the swarm’s behavior through the defined rules or reward functions. However, the complex interactions between agents, combined with the stochasticity of RL (random weight initialization, exploratory actions, and stochastic policies), lead to emergent behaviors that are often difficult to anticipate or precisely control.

For example, a seemingly innocuous change in a reward function – say, slightly increasing the reward for ‘proximity to other agents’ – can lead to unexpected clustering patterns or even the formation of ‘loitering’ behaviors where agents congregate in unproductive areas. Debugging such systems is notoriously difficult, as the root cause of the emergent behavior is often buried deep within the interactions of hundreds or thousands of agents.
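
The reward-shaping effect can be made concrete with a toy team reward. The 1-D track, the debris cells, and the `proximity_weight` bonus are hypothetical. For this layout the spread-out configuration earns 3 and the clustered one earns 1 + 4w, so once the proximity weight w exceeds 0.5 the ‘loitering’ configuration becomes the higher-scoring one, even though the task term is unchanged. An optimizer of this reward then has every reason to prefer it.

```python
from typing import List, Sequence, Set


def agent_rewards(
    positions: Sequence[int], debris_cells: Set[int], proximity_weight: float, radius: int = 1
) -> List[float]:
    """Per-agent reward on a 1-D track: 1.0 for standing on debris, plus a bonus per nearby agent."""
    rewards = []
    for i, p in enumerate(positions):
        task = 1.0 if p in debris_cells else 0.0
        nearby = sum(1 for j, other in enumerate(positions) if j != i and abs(p - other) <= radius)
        rewards.append(task + proximity_weight * nearby)
    return rewards


def team_reward(positions: Sequence[int], debris_cells: Set[int], proximity_weight: float) -> float:
    """Sum of the per-agent rewards."""
    return sum(agent_rewards(positions, debris_cells, proximity_weight))


if __name__ == "__main__":
    debris = {1, 4, 7}
    spread = [1, 4, 7]     # one agent on each debris cell: productive
    clustered = [3, 4, 5]  # agents huddled around one cell: loitering
    for w in (0.1, 0.4, 0.6, 1.0):
        clustered_wins = team_reward(clustered, debris, w) > team_reward(spread, debris, w)
        print(f"proximity_weight={w}: {'clustered' if clustered_wins else 'spread'} scores higher")
```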

Current and Near-Term Impact: Risks and Opportunities

- Autonomous Vehicles: Swarm-robotic systems for traffic management or coordinated delivery services face the risk of unpredictable behavior in unexpected scenarios. A minor programming error could lead to a cascade of failures, impacting safety and efficiency.

- Environmental Monitoring: Swarm-based sensor networks for pollution detection or wildlife tracking can exhibit biases or inaccuracies due to emergent clustering or communication patterns, leading to flawed data and incorrect conclusions.

- Financial Markets: Algorithmic trading systems employing MASI principles can generate unexpected market volatility if the emergent behavior is not carefully monitored and controlled. The lack of transparency in these systems exacerbates the risk.

- Search and Rescue: While promising for navigating hazardous environments, uncontrolled swarm behavior could hinder rescue efforts or even endanger rescuers.

Despite these risks, the opportunities are substantial. Understanding and mitigating the illusion of control is key to unlocking the full potential of MASI. This involves:

- Explainable AI (XAI) for Swarms: Developing techniques to understand why a swarm is behaving in a particular way. This includes visualizing agent interactions, identifying influential agents, and tracing the flow of information within the swarm.

- Formal Verification: Applying formal methods to verify the correctness and safety of swarm behavior, particularly in safety-critical applications.

- Human-in-the-Loop Control: Designing systems that allow human operators to intervene and guide the swarm’s behavior when necessary.

- Constrained Emergence: Developing programming paradigms that encourage desired emergent behaviors while limiting the scope of unpredictable outcomes.
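
One possible way to combine human-in-the-loop control with constrained emergence is a runtime filter, often called a shield in the safe-RL literature, that sits between each agent's learned policy and its actuators. The rectangular geofence, the ‘stay put’ fallback, and the `operator_override` argument below are illustrative assumptions, not a standard API.

```python
from typing import Optional, Tuple

Vec = Tuple[int, int]


def shield(
    position: Vec,
    proposed_move: Vec,
    geofence: Tuple[int, int, int, int],
    operator_override: Optional[Vec] = None,
) -> Vec:
    """Filter one agent's proposed move before it is executed.

    geofence is (x_min, y_min, x_max, y_max), inclusive. A human command, when present,
    always wins; otherwise a move that would leave the geofence is replaced by 'stay put'.
    """
    if operator_override is not None:
        return operator_override  # human-in-the-loop: operator command takes priority
    x_min, y_min, x_max, y_max = geofence
    nx, ny = position[0] + proposed_move[0], position[1] + proposed_move[1]
    if x_min <= nx <= x_max and y_min <= ny <= y_max:
        return proposed_move  # inside the safe region the learned policy stays in charge
    return (0, 0)  # constrained emergence: veto moves that would leave the safe region


if __name__ == "__main__":
    fence = (0, 0, 9, 9)
    print(shield((5, 5), (1, 0), fence))
    print(shield((9, 5), (1, 0), fence))
    print(shield((9, 5), (1, 0), fence, operator_override=(-1, 0)))
```

The shield only narrows what each agent may do: the collective pattern is still emergent, but it can no longer include positions outside the fence. Whether an operator command should itself be checked against the fence is a policy decision this sketch leaves open.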

Future Outlook (2030s & 2040s)

By the 2030s, we can expect to see more sophisticated MARL algorithms that incorporate causal inference and counterfactual reasoning, allowing for better understanding and control of emergent behavior. GNNs will become even more prevalent, enabling agents to model complex social dynamics and adapt to changing environments. ‘Digital twins’ – virtual representations of real-world swarms – will be used extensively for training and testing, reducing the risk of unexpected behavior in deployment.

In the 2040s, the lines between MASI and other AI paradigms, like generative AI, may blur. We could see ‘swarm architects’ – AI systems that design and optimize swarm configurations based on desired outcomes. The illusion of control may be partially overcome through the development of ‘self-aware’ swarms capable of monitoring their own behavior and adapting their strategies to maintain stability and predictability. However, the fundamental challenge of managing emergent complexity will remain, requiring a continued focus on transparency, explainability, and robust control mechanisms. The ethical implications of deploying increasingly autonomous and potentially unpredictable swarms will also demand careful consideration and proactive regulation.

This article was generated with the assistance of Google Gemini.