Autonomous robotic logistics faces a critical bottleneck: the lack of sufficient labeled data to train robust and reliable systems. Techniques such as synthetic data generation, transfer learning, few-shot learning, and active learning are emerging as crucial ways to unlock the full potential of these robots.

Overcoming Data Scarcity in Autonomous Robotic Logistics

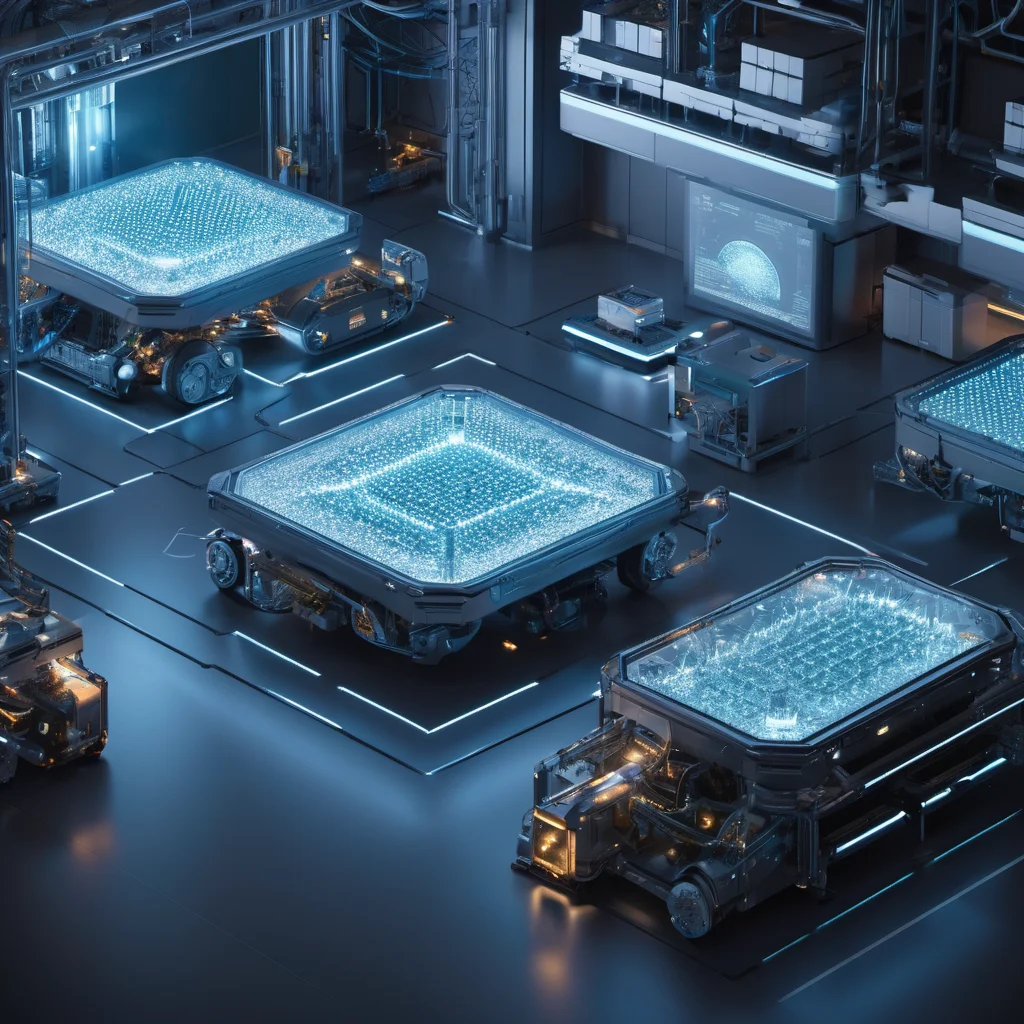

The promise of autonomous robotic logistics (warehouses humming with efficient, self-navigating robots, and delivery drones seamlessly integrated into urban landscapes) is tantalizing. However, realizing this vision hinges on a significant challenge: data scarcity. Supervised machine learning, particularly deep learning, thrives on massive labeled datasets. Training robots to navigate complex, dynamic environments, identify objects, and react safely requires a volume of labeled data that is often impractical and prohibitively expensive to acquire.

This article explores the nature of the data scarcity problem in autonomous robotic logistics, examines current and near-term solutions, and considers the future trajectory of these technologies.

The Data Scarcity Problem: Why It’s Unique to Robotics

The problem isn’t simply about a lack of data; it’s about the type of data needed. Consider a warehouse robot: it needs to learn to identify pallets, boxes, forklifts, and human workers, all while navigating changing layouts, varying lighting conditions, and unexpected obstacles. This requires:

- High Dimensionality: Robotic environments are rich in sensory information (camera images, LiDAR point clouds, depth data). Learning from this data requires significant computational resources and, crucially, many labeled examples.

- Long-Tail Distributions: Rare events – a spilled box, a blocked aisle, a malfunctioning conveyor belt – are critical for safety and reliability, but they occur infrequently, leading to a skewed data distribution. Robots must be prepared for these edge cases, which are often underrepresented in training data.

- Safety-Criticality: Incorrect classifications or navigation decisions can have serious consequences (damage to goods, injury to personnel). This demands extremely high accuracy, which is difficult to achieve with limited data.

- Dynamic Environments: Warehouse layouts and delivery routes change frequently, rendering previously collected data obsolete and requiring continuous retraining.

Current and Near-Term Solutions

Several techniques are emerging to address this data scarcity challenge. They fall broadly into synthetic data generation, transfer learning, few-shot learning, and active learning, and are often used in combination.

1. Synthetic Data Generation:

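Simulated environments are becoming increasingly sophisticated. Game engines such as Unity and Unreal Engine, coupled with physics simulators, can generate vast amounts of labeled data; because the simulator knows the exact identity and position of every object it renders, labels such as bounding boxes and segmentation masks come essentially for free. This data can be tailored to represent specific scenarios, including rare events.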

- Technical Mechanisms: Synthetic data generation relies on procedural content generation (PCG) and physically based rendering. PCG algorithms create variations in warehouse layouts, object placement, and lighting conditions. Physics engines simulate realistic robot dynamics and interactions with the environment. A key technique is domain randomization: simulation parameters are varied so widely that the real world looks like just one more variation, which forces the model to learn robust features that generalize beyond the simulator. Generative Adversarial Networks (GANs) are also being explored to create photorealistic synthetic images.

- Limitations: The “reality gap” – the difference between the simulation and the real world – remains a challenge. Careful calibration and validation are required to ensure that robots trained on synthetic data perform well in real-world deployments.
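To make domain randomization concrete, here is a minimal sketch that samples a randomized set of scene parameters for each synthetic training episode. The parameter names and ranges (`light_intensity`, `num_pallets`, `spill_event`, and so on) are hypothetical placeholders rather than values from any particular simulator; in practice each sampled `SceneParams` would be handed to whatever scene-building API your engine exposes.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    """Hypothetical parameters for one synthetic warehouse episode."""
    light_intensity: float  # relative brightness multiplier
    camera_height_m: float  # camera mounting height in metres
    floor_friction: float   # friction coefficient passed to the physics engine
    num_pallets: int        # number of pallets placed by the PCG step
    spill_event: bool       # whether to inject a rare "spilled box" event


def sample_scene(rng: random.Random, rare_event_prob: float = 0.2) -> SceneParams:
    """Draw one randomized scene; all ranges are illustrative assumptions."""
    return SceneParams(
        light_intensity=rng.uniform(0.3, 1.5),
        camera_height_m=rng.uniform(0.8, 2.0),
        floor_friction=rng.uniform(0.4, 0.9),
        num_pallets=rng.randint(0, 30),  # inclusive on both ends
        # Rare events are deliberately oversampled relative to reality.
        spill_event=rng.random() < rare_event_prob,
    )


def sample_batch(n: int, seed: int = 0) -> list[SceneParams]:
    """Sample n scenes from a seeded generator so the dataset is reproducible."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for params in sample_batch(3):
        print(params)
```

Setting `rare_event_prob` well above the real-world frequency of spills is one way simulation can counter the long-tail problem described earlier; the seeded generator makes a given synthetic dataset reproducible.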

2. Transfer Learning:

Transfer learning leverages knowledge gained from training on a large, related dataset to improve performance on a smaller target dataset. For example, a perception model trained to recognize objects in general indoor environments can be fine-tuned on a smaller dataset of warehouse-specific objects.

- Technical Mechanisms: Typically, a convolutional neural network (CNN) pre-trained on a large dataset such as ImageNet is used as a feature extractor. The lower layers of the CNN, which learn general image features (edges, textures), are frozen, while the higher layers (or a newly added task-specific head) are trained on the target dataset. Because far fewer parameters are trained, much less labeled data is required.

- Benefits: Faster training, improved generalization, and better performance with limited data.
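The freeze-and-fine-tune recipe can be sketched without a deep learning framework. In this sketch, `frozen_features` is a hypothetical stand-in for the pre-trained lower layers (a fixed ReLU layer whose weights are never updated), and only a small logistic-regression head is trained on a handful of made-up target examples. In a framework such as PyTorch, the same idea is usually expressed by setting `requires_grad = False` on the backbone's parameters.

```python
import math

# Stand-in for pre-trained lower layers (hypothetical values). These weights
# are never updated; in practice they would come from, e.g., an ImageNet CNN.
PRETRAINED_WEIGHTS = [[1.0, -0.5], [0.3, 0.8], [-0.7, 0.2]]

# A tiny hypothetical target dataset of (raw input, label) pairs.
TARGET_DATA = [
    ([2.0, 0.0], 1), ([1.5, 0.2], 1),
    ([0.0, 2.0], 0), ([0.2, 1.5], 0),
]


def frozen_features(x):
    """Fixed ReLU layer: maps a 2-D input to a 3-D feature vector."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in PRETRAINED_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, epochs=500, lr=0.5):
    """Fit a logistic-regression head on frozen features by gradient descent."""
    feats = [(frozen_features(x), y) for x, y in data]  # backbone frozen: compute once
    n, dim = len(feats), len(feats[0][0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for f, y in feats:
            err = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) - y
            grad_w = [g + err * fi for g, fi in zip(grad_w, f)]
            grad_b += err
        # Only the head's parameters are updated.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    f = frozen_features(x)
    return int(sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) >= 0.5)


if __name__ == "__main__":
    w, b = train_head(TARGET_DATA)
    print([predict(w, b, x) for x, _ in TARGET_DATA])
```

Precomputing the features once is possible only because the backbone is frozen; full fine-tuning would have to recompute them at every step, which is part of why freezing makes training faster.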

3. Few-Shot Learning:

Few-shot learning aims to train models that can generalize from only a handful of examples. Meta-learning, a common approach to few-shot learning, trains models to learn how to learn, enabling them to adapt quickly to new tasks with minimal data.

- Technical Mechanisms: Meta-learning algorithms, such as Model-Agnostic Meta-Learning (MAML), optimize the model’s initial parameters so that it can quickly adapt to new tasks with a few gradient steps. Siamese networks and triplet networks learn similarity metrics, allowing them to classify new objects based on their similarity to a few labeled examples.

- Challenges: Meta-training for few-shot learning can be computationally intensive (MAML, for example, differentiates through its inner gradient steps) and requires careful design of the training tasks.
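The similarity-based approach can be illustrated with a minimal sketch. It assumes an embedding network (for example a Siamese or triplet network) has already been trained; the vectors in `SUPPORT` are hypothetical, hand-written stand-ins for its outputs. `triplet_loss` shows one common form of the margin objective such networks are trained with, and `classify` labels a query by its single most similar support example (nearest-neighbour classification with cosine similarity).

```python
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge loss that is zero once the negative is at least `margin`
    farther from the anchor than the positive is."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


def classify(query, support):
    """Label `query` with the class of its most similar support embedding.

    `support` maps each class label to a few example embeddings (the "shots").
    """
    best_label, best_sim = None, -math.inf
    for label, embeddings in support.items():
        for emb in embeddings:
            sim = cosine_similarity(query, emb)
            if sim > best_sim:
                best_label, best_sim = label, sim
    return best_label


# Hypothetical embeddings produced by an already-trained network.
SUPPORT = {
    "pallet": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "forklift": [[0.1, 0.9, 0.2]],
    "person": [[0.0, 0.2, 0.95]],
}

if __name__ == "__main__":
    print(classify([0.85, 0.15, 0.05], SUPPORT))  # → pallet
```

Adding a new object class here means adding one or two embeddings to `SUPPORT`; the embedding network itself is not retrained, which is what makes this family of methods attractive when labels are scarce.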

4. Active Learning:

Active learning selects the most informative data points for labeling, maximizing the value of each annotation. Instead of choosing samples at random, the algorithm asks annotators to label the examples about which the current model is most uncertain.
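Uncertainty sampling, one common active-learning strategy, fits in a few lines. This sketch assumes the current model outputs a class-probability vector for every unlabeled sample (the `predictions` dict is made-up illustration data, not real model output) and selects the `budget` samples with the highest predictive entropy for human labeling.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; higher means the model is less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget):
    """Return the IDs of the `budget` most uncertain unlabeled samples."""
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs over (pallet, box, person) for unlabeled frames.
predictions = {
    "frame_001": [0.98, 0.01, 0.01],  # confident: little value in labeling
    "frame_002": [0.40, 0.35, 0.25],  # quite uncertain
    "frame_003": [0.70, 0.25, 0.05],
    "frame_004": [0.34, 0.33, 0.33],  # near-uniform: most uncertain
}

if __name__ == "__main__":
    print(select_for_labeling(predictions, budget=2))  # → ['frame_004', 'frame_002']
```

Entropy is only one possible uncertainty score; least-confidence or margin between the top two classes are common alternatives that plug into the same `sorted` call.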

Future Outlook (2030s & 2040s)

By the 2030s, synthetic data generation is likely to be significantly more advanced, incorporating physics-based simulations that accurately model material properties, friction, and lighting. GANs may generate increasingly realistic and diverse synthetic datasets, blurring the line between simulated and real-world data.

In the 2040s, self-supervised learning techniques, which already exist today, may mature to the point of requiring very little labeled data. Robots would learn from their interactions with the environment, generating their own labels and refining their understanding of the world. Digital twins (virtual replicas of physical environments) are likely to become commonplace, providing a continuous stream of data for training and validation. Furthermore, federated learning, in which robots collaboratively train models without sharing raw data, could become essential for protecting privacy and enabling decentralized learning.
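Federated averaging (FedAvg) is one widely used way to implement this kind of collaborative training. The minimal sketch below shows only its aggregation step: each robot is assumed to have already trained locally and to report just its model weights and its number of local training samples, and the server computes a sample-weighted average, so raw sensor data never leaves the robot. Weights are plain lists of floats purely for illustration.

```python
def federated_average(client_updates):
    """Combine client models into a global model (FedAvg aggregation step).

    client_updates: list of (weights, num_samples) tuples, one per robot.
    All weight lists are assumed to have the same length.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    n_params = len(client_updates[0][0])
    global_weights = [0.0] * n_params
    for weights, n in client_updates:
        # Robots with more local data get proportionally more influence.
        for i, w in enumerate(weights):
            global_weights[i] += w * n / total
    return global_weights


if __name__ == "__main__":
    robot_a = ([1.0, 2.0], 100)  # hypothetical weights, 100 local samples
    robot_b = ([3.0, 4.0], 300)  # hypothetical weights, 300 local samples
    print(federated_average([robot_a, robot_b]))  # → [2.5, 3.5]
```

In a full FedAvg round, the server would send the averaged weights back to every robot, each robot would train locally for a few more steps, and the cycle would repeat.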

Conclusion

Overcoming data scarcity is paramount for the successful deployment of autonomous robotic logistics. The combination of synthetic data generation, transfer learning, few-shot learning, and active learning offers a promising path forward. As these techniques mature and computational resources continue to increase, the vision of fully autonomous and efficient robotic logistics will move closer to reality, transforming industries and reshaping the future of work.

This article was generated with the assistance of Google Gemini.