The burgeoning demand for Large Language Models (LLMs) is straining existing energy infrastructure, leading to unexpected failures and inefficiencies in data centers. These failures highlight the critical need for proactive, adaptive energy solutions specifically designed for the unique power profiles of LLM training and inference.

Real-World Case Studies of Failure in Next-Generation Energy Infrastructure for LLM Scaling

Real-World Case Studies of Failure in Next-Generation Energy Infrastructure for LLM Scaling

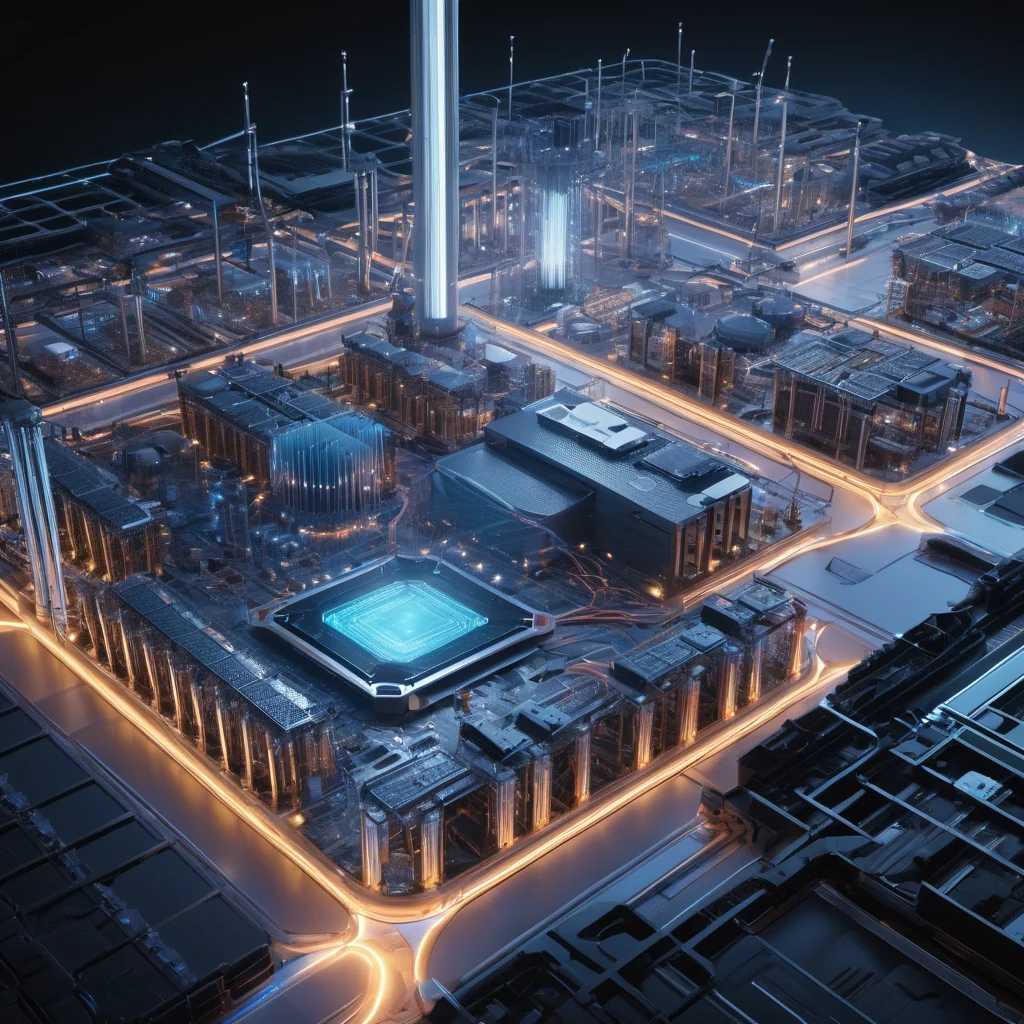

The rapid advancement of Large Language Models (LLMs) like GPT-4, Gemini, and Llama 2 has ushered in an era of unprecedented AI capabilities. However, this progress comes at a significant cost: immense energy consumption. Training and deploying these models requires massive computational power, driving a surge in demand for data centers and, consequently, electricity. While significant investment is being made in next-generation energy infrastructure to support this growth, real-world deployments are already revealing vulnerabilities and failures that threaten LLM scaling and highlight the urgent need for more robust and adaptive solutions.

The Energy Footprint of LLMs: A Growing Crisis

LLMs are not simply computationally intensive; they exhibit unique power profiles. Unlike traditional server workloads, LLM training involves periods of extremely high power draw interspersed with periods of relative quiescence. Inference, while less demanding than training, still requires substantial and consistent power. This dynamic load profile presents a significant challenge for existing power grids and data center infrastructure, which are often designed for more predictable and consistent workloads.

Case Studies of Failure & Near-Misses

While many data center power issues are proprietary and rarely publicly detailed, several incidents and near-misses have emerged, pointing to systemic vulnerabilities. Here are a few illustrative examples:

- The Texas Grid Strain (2023): While not solely attributable to LLM training, the severe winter storm in Texas exposed the fragility of the state’s power grid. Several large data centers, crucial for AI development and deployment, experienced brownouts and curtailments, significantly impacting LLM training schedules and inference services. The lack of grid redundancy and the reliance on intermittent renewable sources exacerbated the situation. This incident underscored the Risk of relying on geographically constrained and vulnerable power sources for critical AI infrastructure.

- European Data Center Curtailments (2022-2023): The energy crisis in Europe, triggered by geopolitical events, led to mandatory electricity curtailments for industrial consumers, including data centers. Several major AI research labs and cloud providers were forced to throttle back LLM training and inference operations to conserve energy. This demonstrated the vulnerability of AI infrastructure to global energy market fluctuations and the potential for significant operational disruptions.

- Silicon Valley Microgrid Instability (Ongoing): The rapid expansion of AI development in Silicon Valley has put significant strain on local microgrids. While these microgrids offer some resilience, they are increasingly susceptible to overload during peak LLM training periods. Reports of voltage sags and localized power outages are becoming more frequent, impacting not only AI infrastructure but also other critical services in the region. The lack of sufficient grid upgrades to accommodate the exponential growth in power demand is a major concern.

- Unexplained Data Center Failures (Confidential Reports): Numerous confidential reports within the cloud provider sector detail unexpected equipment failures in data centers hosting LLM workloads. These failures are often linked to thermal overload, exceeding the design limits of power distribution units (PDUs) and uninterruptible power supplies (UPS) due to the concentrated power draw. The rapid shift to increasingly dense server configurations to maximize LLM training throughput has outpaced the ability of cooling and power infrastructure to adapt.

Technical Mechanisms: Why LLMs are Different

Understanding these failures requires a grasp of the underlying technical mechanisms. LLMs rely on transformer architectures, which are inherently computationally intensive. Here’s a breakdown:

- Transformer Architecture: Transformers utilize self-attention mechanisms, requiring massive matrix multiplications. These operations are typically offloaded to specialized hardware like GPUs or TPUs. The sheer scale of these matrices (billions of parameters) necessitates extremely high memory bandwidth and processing power.

- Distributed Training: Training LLMs is almost always distributed across multiple GPUs or TPUs. This introduces communication overhead and synchronization challenges, further increasing power consumption. The network interconnects between these devices become a critical bottleneck.

- Mixed Precision Training: To mitigate power consumption, techniques like mixed-precision training (using both FP16 and FP32 floating-point formats) are employed. However, this introduces complexity in hardware and software management, potentially leading to instability if not implemented correctly.

- Dynamic Power Profiles: As mentioned earlier, LLM workloads exhibit highly dynamic power profiles. Training phases can involve near-constant peak power draw, while inference can be more variable. Traditional data center power infrastructure, often designed for average load, struggles to handle these fluctuations.

Current Mitigation Strategies & Their Limitations

Several strategies are being employed to address these challenges, but each has limitations:

- Increased Renewable Energy Adoption: While crucial for long-term sustainability, renewable energy sources are intermittent and require robust energy storage solutions.

- Improved Data Center Cooling: Advanced cooling techniques like liquid cooling are being implemented, but they are expensive and complex.

- Power Usage Effectiveness (PUE) Optimization: Reducing PUE (the ratio of total data center power to IT equipment power) is a priority, but gains are diminishing as data centers approach theoretical limits.

- Dynamic Voltage and Frequency Scaling (DVFS): Adjusting voltage and frequency based on workload demands can save power, but it can also impact performance and stability.

Future Outlook (2030s & 2040s)

Looking ahead, the energy demands of LLMs are only going to intensify. By the 2030s, we can expect:

- Ubiquitous AI-Specific Microgrids: Data centers will increasingly rely on dedicated microgrids powered by a mix of renewable energy, energy storage, and potentially even small modular nuclear reactors (SMRs).

- Advanced Power Management Systems: AI-aware power management systems will dynamically allocate power based on real-time workload demands and grid conditions.

- Neuromorphic Computing: The emergence of neuromorphic computing architectures, which mimic the human brain’s energy efficiency, could significantly reduce the power footprint of AI.

In the 2040s, we may see:

- Decentralized AI Infrastructure: AI training and inference could become more decentralized, leveraging edge computing and distributed data centers to reduce reliance on centralized power grids.

- Fusion Power Integration: If fusion power becomes a reality, it could provide a virtually limitless source of clean energy for AI infrastructure.

- Quantum Computing Impact: While still nascent, quantum computing could potentially revolutionize AI algorithms, drastically reducing their computational complexity and energy requirements.

Conclusion

The failures and near-misses we’re witnessing today are not isolated incidents; they are symptoms of a systemic problem. Scaling LLMs requires a fundamental rethinking of energy infrastructure, moving beyond incremental improvements to embrace innovative technologies and proactive, adaptive solutions. Ignoring these challenges will stifle AI innovation and create significant economic and operational risks.

This article was generated with the assistance of Google Gemini.